How It Works

Durable Workflow uses Laravel's queued jobs and event-sourced persistence to create durable coroutines. Workflows suspend through Fiber-backed helper calls, and those suspension points form the durable replay contract.

Runtime

A workflow is a class whose handle() method calls straight-line helpers such as activity(), await(), timer(), sideEffect(), child(), and all([...]). Each helper call suspends the workflow until the corresponding durable step completes, then resumes from where it left off with the recorded result.

Every step produces a durable history event. The engine replays that history whenever the workflow wakes up, rebuilding state from the event stream before running the next unexecuted step. That replay is what lets a workflow survive worker restarts, deployments, and machine failures without losing its place.

WorkflowStub::make() reserves a public workflow instance id. Starting the workflow creates the first run and the first workflow task. Each run has its own run id; operations such as signal(), cancel(), and terminate() target the instance's current run.

Event Sourcing

Event sourcing builds up the current state from a sequence of saved events rather than saving the state directly. This has two main benefits: it provides a complete history of the execution events, and that history can be used to resume a workflow if the worker crashes.
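To make the idea concrete, here is a minimal sketch of rebuilding state by folding over saved events. The event names and array shapes are hypothetical and chosen only for illustration; they are not the package's actual history schema.

```php
<?php

declare(strict_types=1);

/**
 * Rebuild the current state by applying saved events in order.
 * Event types and fields are hypothetical, not the package's schema.
 *
 * @param list<array<string, mixed>> $events
 * @return array{status: string, results: array<string, mixed>}
 */
function rebuildState(array $events): array
{
    $state = ['status' => 'pending', 'results' => []];

    foreach ($events as $event) {
        switch ($event['type']) {
            case 'WorkflowStarted':
                $state['status'] = 'running';
                break;
            case 'ActivityCompleted':
                // Store the recorded result under the step that produced it.
                $state['results'][$event['step']] = $event['result'];
                break;
            case 'WorkflowCompleted':
                $state['status'] = 'completed';
                break;
        }
    }

    return $state;
}

// The state is never stored directly; it is derived from the event list.
$events = [
    ['type' => 'WorkflowStarted'],
    ['type' => 'ActivityCompleted', 'step' => 'charge', 'result' => 'ok'],
];

$state = rebuildState($events);
```

Because the state is derived, a worker that crashes after the second event can be replaced by any other worker that reads the same two events and arrives at the same state.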

Coroutines

Coroutines are functions whose execution can be suspended and resumed. Durable suspension points are expressed as straight-line Fiber-backed helper calls such as activity(), await(), timer(), and sideEffect().

User workflow code lives in handle(), which is an ordinary method that calls those helpers directly. The runtime first checks whether the step already completed durably. If so, the cached result is replayed from history instead of running the step a second time. Otherwise, the runtime queues the next activity, timer, or child work and suspends until that durable step completes or fails.
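The check-history-then-suspend behavior can be sketched with a plain PHP Fiber (PHP 8.1 or later). ToyRuntime, step(), and runOnce() are hypothetical names for this illustration; the real runtime persists history durably and queues real work, which this toy replaces with an in-memory array and strtoupper().

```php
<?php

declare(strict_types=1);

/** Toy stand-in for the runtime; not the package's implementation. */
final class ToyRuntime
{
    /** @var list<mixed> recorded step results, in call order */
    public array $history = [];

    /** @var list<string> steps that actually ran (were not replayed) */
    public array $executed = [];

    private int $cursor = 0;

    /** Called from workflow code: replay a recorded result or suspend. */
    public function step(string $name): mixed
    {
        $index = $this->cursor++;

        if (array_key_exists($index, $this->history)) {
            return $this->history[$index]; // replayed, the step is not re-run
        }

        // Not recorded yet: hand the step to the runtime and suspend.
        return Fiber::suspend($name);
    }

    /** One wake-up: replay from the top until the next unrecorded step. */
    public function runOnce(callable $workflow): ?array
    {
        $this->cursor = 0;
        $fiber = new Fiber($workflow);
        $pending = $fiber->start($this);

        if ($fiber->isTerminated()) {
            return $fiber->getReturn();
        }

        // Stand-in for queueing the step and recording its result.
        $this->executed[] = $pending;
        $this->history[] = strtoupper($pending);

        return null; // the suspended fiber is dropped, like a job exiting
    }
}

$workflow = function (ToyRuntime $runtime): array {
    $first = $runtime->step('first');
    $second = $runtime->step('second');

    return [$first, $second];
};

$runtime = new ToyRuntime();
$result = null;

// Each loop iteration models one re-dispatch of the workflow.
while ($result === null) {
    $result = $runtime->runOnce($workflow);
}
```

The workflow closure runs from the top three times here, yet each step executes only once; the later runs read the earlier results from history.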

Activities

A workflow that calls multiple activities can orchestrate them, passing the result of one into the next. The execution of the workflow and the durable steps it schedules are interleaved: the workflow reaches an activity call, suspends until that activity completes, and then continues execution from where it left off.

If a workflow fails, the events leading up to the failure are replayed to rebuild the current state. This allows the workflow to pick up where it left off, with the same inputs and outputs as before, ensuring determinism.

Activities are always durable queued work in v2. There is no in-process local activity fast path. Ordinary activities can run on any compatible worker, and worker sessions add an explicit lease when a sequence of activity steps needs the same worker-local resource. If you need a replay-safe one-shot value without queueing an activity, use sideEffect(...). For the full contract, see Activity Execution Model.

Execution Guarantees

Workflow code and activity code have different repeat-execution semantics:

- Workflow code is replayed. Re-delivering a workflow task rebuilds state from durable history and re-runs deterministic authoring code. Replay does not re-run external side effects.

- Activities are at-least-once queued work. A logical activity may be retried, redelivered after lease expiry, or re-observed after worker loss. Duplicate delivery is a normal distributed-systems condition, not a bug by itself.

- Activity identity is durable. activity_execution_id identifies one logical activity execution across retries and redelivery, while activity_attempt_id identifies an individual try. Use activity_execution_id as the default remote idempotency key; use activity_attempt_id only when a downstream system needs per-attempt correlation.

See Execution Guarantees and Idempotency, Activity Execution Model, and Failures and Recovery for the full v2 contract.
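As an illustration of why the execution id makes a good idempotency key, the sketch below models a downstream service that deduplicates on that key. PaymentGateway and its charge() method are hypothetical, and how an activity reads its activity_execution_id is not shown here; the literal string merely stands in for it.

```php
<?php

declare(strict_types=1);

/** Hypothetical downstream service that deduplicates on an idempotency key. */
final class PaymentGateway
{
    /** @var array<string, string> charge id per idempotency key */
    private array $seen = [];

    public int $chargesCreated = 0;

    public function charge(string $idempotencyKey, int $amountCents): string
    {
        // A redelivered attempt with the same key gets the original charge.
        if (isset($this->seen[$idempotencyKey])) {
            return $this->seen[$idempotencyKey];
        }

        $this->chargesCreated++;
        $this->seen[$idempotencyKey] = 'charge-' . $this->chargesCreated;

        return $this->seen[$idempotencyKey];
    }
}

$gateway = new PaymentGateway();

// Stand-in for activity_execution_id: stable across retries and redelivery.
$executionId = 'exec-123';

$firstAttempt = $gateway->charge($executionId, 500);
$redelivered = $gateway->charge($executionId, 500); // same logical execution
```

Keying on the attempt id instead would create a second charge on redelivery, which is why the per-attempt id is reserved for correlation rather than deduplication.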

Queues

Queued jobs are background processes that run at a later time. Laravel supports queues via Amazon SQS, Redis, or a relational database. Workflows and activities are both queued jobs, but they behave a little differently. A workflow is dispatched multiple times during normal operation: it runs, dispatches one or more activities, and then exits until the activities complete. An activity is an at-least-once queued task: the common case is one successful attempt, but retry, lease expiry, or worker loss can cause the same logical activity execution to be delivered more than once.

Example

    use Workflow\V2\Workflow;
    use function Workflow\V2\{activity, all};

    class MyWorkflow extends Workflow
    {
        public function handle(): array
        {
            return [
                activity(TestActivity::class),
                activity(TestOtherActivity::class),
                fn () => all([
                    fn () => activity(TestParallelActivity::class),
                    fn () => activity(TestParallelOtherActivity::class),
                ]),
            ];
        }
    }

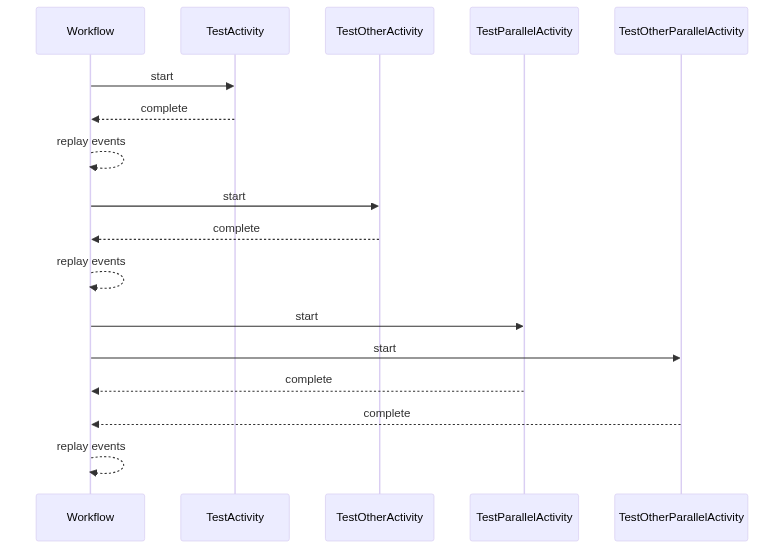

Sequence Diagram

This sequence diagram shows how a workflow progresses through a series of activities, both serial and parallel.

- The workflow starts by getting dispatched as a queued job.

- The first activity, TestActivity, is then dispatched as a queued job. The workflow job then exits. Once TestActivity has completed, it saves the result to the database and returns control to the workflow by dispatching it again.

- At this point, the workflow enters the event sourcing replay loop. This is where it goes back to the database and looks at the event stream to rebuild the current state. This is necessary because the workflow is not a long-running process. The workflow exits while any activities are running and is dispatched again after they complete.

- Once the event stream has been replayed, the workflow continues to the next activity, TestOtherActivity, and starts it by dispatching it as a queued job. Again, once TestOtherActivity has completed, it saves the result to the database and returns control to the workflow by dispatching it as a queued job.

- The workflow then enters the event sourcing replay loop again, rebuilding the current state from the event stream.

- Next, the workflow starts two parallel activities, TestParallelActivity and TestParallelOtherActivity. Both activities are dispatched. Once they have completed, they save the results to the database and return control to the workflow.

- Finally, the workflow enters the event sourcing replay loop one last time to rebuild the current state from the event stream. This completes the execution of the workflow.
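The dispatch order described above can be traced with a toy in-memory queue. This is a simplified sketch: the job arrays, the $stages list, and the rule that the workflow is re-dispatched once every activity in a stage has finished are assumptions made for illustration, not the engine's actual scheduling logic.

```php
<?php

declare(strict_types=1);

// Toy in-memory queue and history; all names here are hypothetical.
$queue = new SplQueue();
$history = [];      // saved activity results, keyed by activity name
$log = [];          // order in which jobs ran
$completed = false;

// Each inner list is one stage; a stage with two names runs in parallel.
$stages = [
    ['TestActivity'],
    ['TestOtherActivity'],
    ['TestParallelActivity', 'TestParallelOtherActivity'],
];

$queue->enqueue(['kind' => 'workflow']);

while (!$queue->isEmpty()) {
    $job = $queue->dequeue();

    if ($job['kind'] === 'workflow') {
        $log[] = 'workflow';

        // Replay: skip every stage whose results are already saved.
        $next = null;
        foreach ($stages as $index => $names) {
            if (array_diff($names, array_keys($history)) !== []) {
                $next = $index;
                break;
            }
        }

        if ($next === null) {
            $completed = true; // nothing left to schedule
            continue;
        }

        foreach ($stages[$next] as $name) {
            $queue->enqueue(['kind' => 'activity', 'name' => $name, 'stage' => $next]);
        }

        continue; // the workflow job exits while activities run
    }

    // Activity job: save the result, then wake the workflow when the stage is done.
    $log[] = $job['name'];
    $history[$job['name']] = 'done';

    if (array_diff($stages[$job['stage']], array_keys($history)) === []) {
        $queue->enqueue(['kind' => 'workflow']);
    }
}
```

In this trace the workflow job runs four times for four activities: once to start, and once after each of the three stages completes.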

Determinism

Because history is replayed on every wake-up, workflow code must produce the same commands given the same history. Read Constraints for the authoring rules and the helpers Durable Workflow exposes (Workflow\now(), sideEffect(), getVersion(), and similar) for situations where code would otherwise be non-deterministic.
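The sketch below illustrates the general idea behind recording a non-deterministic value once and replaying it afterwards. RecordedValues, once(), and rewind() are hypothetical names for this toy; it shows the concept only and is not how sideEffect() is implemented.

```php
<?php

declare(strict_types=1);

/** Toy recorder: runs the callback once, then replays the recorded value. */
final class RecordedValues
{
    /** @var list<mixed> values recorded in call order */
    private array $recorded = [];

    private int $cursor = 0;

    public function once(callable $produce): mixed
    {
        $index = $this->cursor++;

        // Only call the producer when no value was recorded at this position.
        if (!array_key_exists($index, $this->recorded)) {
            $this->recorded[$index] = $produce();
        }

        return $this->recorded[$index];
    }

    /** Start reading from the beginning again, as a replay would. */
    public function rewind(): void
    {
        $this->cursor = 0;
    }
}

$values = new RecordedValues();

$firstRun = $values->once(fn () => random_int(1, 1_000_000));

$values->rewind(); // simulate the workflow waking up and replaying

$replayed = $values->once(fn () => random_int(1, 1_000_000));
```

Calling random_int() directly in workflow code would yield a different value on each replay; routing it through a recorded value keeps every replay on the same path.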